Data strategy in progress

Setting out our approach in the open

This blog post is about the data strategy work that we are doing at Citizens Advice.

It’s not a data strategy as such. Or maybe it is?

I’ve presented this material many times and now I am writing it down so we have a starting point for talking in the open about how we’re getting on.

There are a few things that I hope you take away from this post. First is that a strategy isn’t a document. Second is that you need to think critically about the context and situation you find yourself in, so that you can work out how to make a positive difference. Third is that you need to make sure your data strategy work has a broad appeal that brings people along with you.

You have to create something that reflects culture and language that people recognise even though you are aiming for change. I don’t believe that you can transplant a data strategy wholesale from one organisation onto another.

There are three main elements to our data strategy work:

- Questions to answer

- Delivery

- Themes and principles

I will explain these elements below after providing our context.

Context

There are four main things that have informed our approach to data strategy work.

The first is that our organisation-wide strategy ‘Future of Advice’ is coming to an end. It was extended for a year due to the pandemic. We know that Citizens Advice will be developing a new organisational strategy. There is a risk that we could launch a data strategy that isn’t aligned to what the organisation will be trying to achieve. I don’t want to do data strategy work as an end in itself. I want to make sure we’re making a contribution that’s moving us in the right collective direction for the organisation and the people that we help.

The second bit of context is that we have a current organisational priority which is ‘meeting more demand’. Demand for our services is high and rising due to circumstances in society. We need to ensure a focus on client outcomes as well — it’s not just about volume. We can apply a ‘meeting more demand’ lens to all of our data strategy work. It’s helpful.

The third bit of context is what we have learned as a result of the pandemic. The initial crisis response from Citizens Advice in spring 2020 necessitated working with our data in ways we hadn’t done consistently before. We developed and iterated new products, we instituted new practices (particularly regular open conversations about our data), and we came to understand the strengths and weaknesses of our evidence base in new ways.

That work hasn’t stopped; it’s been continuously improving and developing. The patterns of demand for advice are changing and the ways in which we deliver it have changed. We need to continue to build on this experience. We need to stay curious and keep improving our understanding of what is happening.

The final bit of context is about capacity. The Data Science team is leading our data strategy work, and there are 12 of us. The National organisation that we work in has around 700 staff, and then we have our Network of 260+ independent local Citizens Advice across England and Wales. Don’t get me wrong, I know that for a charity we’ve got a generous complement of data specialists. But we can only achieve so much and mustn’t spread ourselves too thin.

So this context informs how we prioritise the data strategy work, and also our collaborative approach where we work with others to deliver data improvement.

This context means that I expect this framework to last for 12 to 18 months. [UPDATE 2022–04–06]

Our aim is to improve our data capability across the organisation. This will prepare us for a new organisational strategy. We will combine incremental and iterative improvements with larger pieces of work. We will prioritise based on greatest areas of need and opportunity. We will build on existing good practice and be unfailingly collaborative in our approach. And finally we will be able to measure and demonstrate our progress.

Questions to answer

This is my favourite bit of our data strategy work.

There are questions about our service and our clients that we can’t currently answer at all, or with sufficient confidence, or that take disproportionate time and effort to deliver.

So we are focusing our data improvement efforts on ‘questions to answer’, particularly to support helping our clients.

Here are some examples:

“How many times do people call us on the telephone before getting through?”

“What do we know about the differences in ways of working between paid staff and volunteers?”

“How many people visit our website before calling us on the telephone?”

Framing data improvement in terms of questions to answer is a good practice. It avoids focusing on individual systems, or abstract architecture and data design.

It democratises data by reducing barriers to entry and understanding. It requires contribution from a wide and diverse group in order to work well. Framing good questions is a skill in itself, but that’s something data specialists (and other research and design disciplines) can help with.
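To make the framing concrete, here is a minimal sketch of how the first example question might be answered from a call log. The record layout, the caller IDs, and the function name are all assumptions made up for illustration, not a description of our telephony data.

```python
from collections import defaultdict

# Hypothetical call log: (caller_id, ISO timestamp, answered?). Invented data.
calls = [
    ("A", "2022-03-01T09:00", False),
    ("A", "2022-03-01T09:10", False),
    ("A", "2022-03-01T09:30", True),
    ("B", "2022-03-01T10:00", True),
    ("C", "2022-03-01T11:00", False),
]


def attempts_before_getting_through(call_log):
    """Return {caller_id: calls made up to and including the first answered one}.

    Callers who never got through are left out of the result.
    """
    counts = defaultdict(int)
    result = {}
    # ISO 8601 timestamps sort correctly as plain strings.
    for caller, _timestamp, answered in sorted(call_log, key=lambda c: c[1]):
        if caller in result:
            continue  # already got through; ignore later calls
        counts[caller] += 1
        if answered:
            result[caller] = counts[caller]
    return result


attempts = attempts_before_getting_through(calls)
never_through = {caller for caller, _, _ in calls} - set(attempts)
print(attempts)
print(never_through)
```

Even a toy version like this surfaces the follow-up questions that matter, such as how to count people who never get through and what we would do differently once we know.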

I think the questions to answer framing is especially motivating because the data at Citizens Advice is rich and immediate — my colleagues tell great stories with our data.

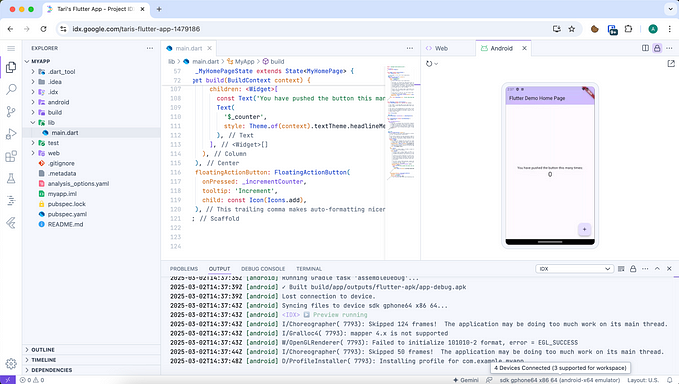

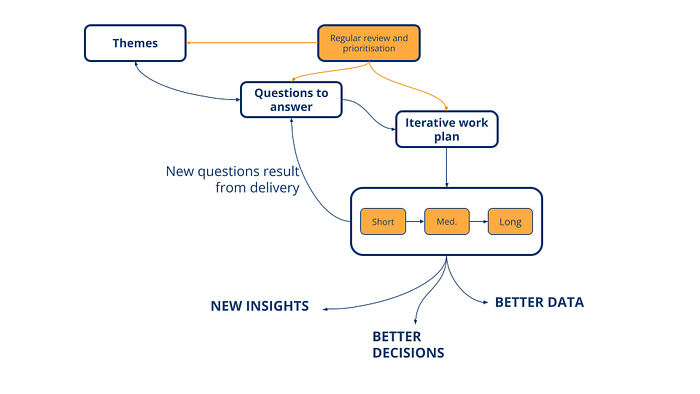

Reflecting on where we’re at with this questions piece, a few months ago I thought we’d have a single backlog to work from. I had a high-level process in mind like this:

However, going back to the capacity point earlier, there are too many potential questions and too many people asking them to be able to manage this in one place. I’m not sure it would be valuable to do so anyway.

We do have backlogs of questions to answer and prioritisation of them at team level in several places, and this is something to build on.

We also have a rolling programme of weekly data updates to the Executive and Directors team which James leads on. This is with input from the Data Science team and our counterparts in the Impact team led by Tom. These updates also get shared with all National staff via Workplace, and we plan to start sharing with the Network¹ soon too. These data updates have driven some of the most forward-thinking and fresh data work that I’ve been involved in since I started this role in late 2019. We know things that we didn’t know 6 months ago, which is itself a measure of improvement.

This practice of data updates is evolving, involving more people from across the organisation. We see the regular updates in other forums too — for example I often do one to the leadership team I’m in at our weekly meeting. And we continue to talk about our data at regular sessions open to all National staff.

The evolution of the Executive and Directors data update points to an opportunity for a new forum to discuss performance focused on client outcomes in more depth. I think this is a good result, because through practice we’ve illustrated some potential new governance, rather than designing it up front.

The questions to answer illustrate areas where we can make longer term improvements to our data — for example introducing new data sets. There have been a few of these implemented in recent months — new volunteer data, new insight on website impact, and more detailed understanding of various telephony models. So, the questions to answer framing really does enable a virtuous cycle of improvement in the short, medium, and longer term.

Fundamentally this part of our data strategy work is about culture. I know that we’re moving in the right direction when I see other people from across the organisation framing their data needs as questions to answer.

Finally, let’s remember that we don’t do this data work just to come up with some interesting stories. We want to enable better decisions and actions. So a useful follow up to “what questions do you want to answer with the data?” is “and what will you do differently as a result?”

Delivery

As a Data Science team we have 5 priority items for delivery in the next financial year. They are:

- Network data

- Blended working

- Taxonomy alignment

- Open data

- Contribution to the Data Platform

We’ve developed these using the context that I set out at the beginning. Every item involves collaboration with other teams. As with the questions to answer, this list is evolving, but this is where we are at this point in time. We’ve also started work on all of these items.

I’ll explain them in some more detail:

Network data

The list of items isn’t in priority order, but I’d say this is the biggest one.

The outcome we will achieve here is a significant improvement in our data about our Network of 260+ local Citizens Advice. We have an opportunity to introduce some ‘single sources of truth’ and develop new data products that will deliver new insight to the teams that need them, along with being simpler to maintain and improve.

We’ll be using our data architecture, analysis, and engineering expertise for this piece.

Blended working

This is a continuation of work that we’ve been doing for a while.

The outcome we will achieve here is a collaborative approach to data work by default. We want to move away from a ‘client / supplier’ mode of working altogether. We’ve got established examples of this working well with our colleagues in the Impact team working with Policy. We’ve also made great progress with some of our Operations colleagues, particularly on telephone delivery.

We’ll be working in earnest with two of the most capable ‘data adjacent’ groups in Product and Content. I would like to get to the point where we can make a strong case for having the capacity to have more data specialists embedded in multidisciplinary teams.

This is a whole team effort in terms of the expertise we’ll be using.

Taxonomy alignment

Put as simply as I can, this is about being able to compare two or more sets of things that are the same (or similar) but have different names. This is a common data problem, not unique to Citizens Advice. Often the reasons for having lists of names that are different are valid.

The outcome we will achieve is making it easier to analyse activity and topic trends across channels and services, for example across our website content and our case work data.

We’ll be using data architecture and data science expertise in particular for this piece.
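One common way to approach this kind of problem is a crosswalk: each source keeps its own labels, and a mapping table translates them to a shared set of topics for comparison. The sketch below assumes that approach. The labels, the counts, and the names `WEB_TO_SHARED`, `CASE_TO_SHARED`, and `align` are all hypothetical and invented for illustration.

```python
from collections import Counter

# Hypothetical crosswalks from each source's own labels to a shared topic.
WEB_TO_SHARED = {
    "Benefits": "benefits",
    "Debt and money": "debt",
    "Work": "employment",
}
CASE_TO_SHARED = {
    "Benefits & tax credits": "benefits",
    "Debt": "debt",
    "Employment": "employment",
}


def align(records, crosswalk):
    """Count records per shared topic.

    Labels missing from the crosswalk are collected separately rather than
    silently dropped, so gaps in the mapping stay visible.
    """
    counts, unmapped = Counter(), Counter()
    for label, n in records:
        if label in crosswalk:
            counts[crosswalk[label]] += n
        else:
            unmapped[label] += n
    return counts, unmapped


# Invented figures: page views by website label, cases by case work label.
web_views = [("Benefits", 1200), ("Debt and money", 800), ("Housing", 300)]
cases = [("Benefits & tax credits", 90), ("Debt", 60), ("Employment", 20)]

web_counts, web_unmapped = align(web_views, WEB_TO_SHARED)
case_counts, case_unmapped = align(cases, CASE_TO_SHARED)

for topic in sorted(set(web_counts) | set(case_counts)):
    print(topic, web_counts[topic], case_counts[topic])
print("unmapped website labels:", dict(web_unmapped))
```

Keeping the source labels untouched and doing the alignment in a separate mapping respects the point that the different lists often exist for valid reasons.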

Open data

At Citizens Advice we publish Advice Trends which is a public Tableau dashboard. We don’t publish raw data. I think doing so will open up lots of opportunities for collaboration. Working with a wider variety of data users will also help us to understand how to increase the utility of our data at source.

The outcome we will achieve is to build a community of users around our data, increasing its reach and value.

I’ll be leading on this piece, but I won’t be keeping it to myself — I want to make sure we can bring in extra capacity so that everybody in the team can participate.

Contribution to the Data Platform

The first workstream in the Citizens Advice Product Strategy is ‘build measurement capability’. As part of this workstream the product team developing our data platform are “build[ing] on the foundational infrastructure work they have already delivered, bringing in further datasets from different advice channels.”

The Data Science team are in a different position than we were 18 months ago, particularly in terms of our collective technical skill and our overall capacity. We will be making an active contribution to the data platform work, particularly thinking about the tools and environments we need to do data science work at production scale. This piece is very similar to the ‘Blended working’ piece, but the difference is that we benefit as a team from the Data Platform’s success.

We’ll be contributing to the intended outcomes from the Product Strategy here.

This is a whole team effort in terms of the expertise we’ll be using.

Themes and principles

The ‘Delivery’ section is focused primarily on what the Data Science team will be doing. However, this strategy work is for the benefit of the whole organisation. I want it to cover the full range of our data and to be accessible to everybody.

To this end we are developing a lightweight framework of themes and principles. We apply these to all the work I’ve described so far. We developed the lists below collaboratively, inviting views from a wide variety of colleagues and iterating based on feedback.

These themes and principles give us the opportunity to help improve data work elsewhere in the organisation by empowering teams and groups to take responsibility for developing them and putting them into practice. They are still a work in progress and, reading them back, some of the thinking and discussion that went into them has been obscured. In particular, our consideration of data ethics, which we want to introduce as standard practice, has been lost under principles 1, 6, and 7. [UPDATE 2022–04–06]

These themes and principles also enable work on data standards, and introducing other ways to assess our progress and level of data maturity. I’ll cover these briefly too.

Themes

We have seven high level themes:

- Understand so that we focus on users’ needs

- Describe so that we can be consistent

- Develop so that we continuously deliver value

- Measure so that we can make decisions

- Analyse so that we can answer questions

- Own so that we are responsible for quality

- Protect so that we are responsible for security

Principles

Under each theme we have a few specific principles. We can use these to hold ourselves to account — for any given piece of work are we doing all of these things? Here are a couple of examples:

- Describe so that we can be consistent

  - Model our data and maintain those models

  - Design data for reuse

  - Make data easy to find

  - Keep barriers to entry low

  - Advocate for our data

- Analyse so that we can answer questions

  - Use the best tool for the task

  - Facilitate self-service

  - Doesn’t have to be perfect

  - Show your method

  - Use peer review

  - Understand any limitations of the data
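As a rough illustration of holding ourselves to account, the two example themes could be kept as a simple checklist and compared against what a piece of work has covered. This is only a sketch: the `outstanding` function and the idea of recording principles as "met" are assumptions for illustration, not a tool we use.

```python
# The two example themes and their principles, as listed above.
PRINCIPLES = {
    "Describe so that we can be consistent": [
        "Model our data and maintain those models",
        "Design data for reuse",
        "Make data easy to find",
        "Keep barriers to entry low",
        "Advocate for our data",
    ],
    "Analyse so that we can answer questions": [
        "Use the best tool for the task",
        "Facilitate self-service",
        "Doesn't have to be perfect",
        "Show your method",
        "Use peer review",
        "Understand any limitations of the data",
    ],
}


def outstanding(met):
    """Return {theme: [principles not yet met]}, only for themes with gaps."""
    gaps = {}
    for theme, principles in PRINCIPLES.items():
        missing = [p for p in principles if p not in met]
        if missing:
            gaps[theme] = missing
    return gaps


# Hypothetical piece of work: which principles the team feels it has met.
met_so_far = {
    "Design data for reuse",
    "Make data easy to find",
    "Show your method",
    "Use peer review",
}

for theme, missing in outstanding(met_so_far).items():
    print(theme)
    for principle in missing:
        print("  still to do:", principle)
```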

I will write about the full list of themes and their development in a future blog post. [UPDATE 2022–04–06]

Standards

Data standards can be expensive to implement and maintain. Therefore they have to deliver value. So far our standards work is about defining our own, informed by good practice elsewhere, rather than adopting external standards wholesale. We use the context we are working in to prioritise our efforts here.

Examples of standards we are working on include documentation, demographic data categories, templates for reporting, and data visualisation.

Data maturity

We categorise our work using a set of labels based on this data advantage matrix. This helps us to measure our progress and to identify areas of strength and things that need development. We are also applying this approach to more specific areas of maturity, such as data science.

So that’s where we’re at. I don’t have a big conclusion but thank you for reading if you made it this far! Please get in touch if you’d like to discuss.

I have transposed this blog post with a few edits into a public Google Doc which we will use to support our progress. [UPDATE 2022–04–06]

Footnotes

¹ Recognising here that data users’ needs differ between the National organisation and the Network. This is healthy in itself: my view is that we need to go further beyond headline and aggregate measures in our analyses, and that should appeal to the Network.